Managing servers across different environments can be complex, especially when you are dealing with a range of configurations, security rules, and compliance needs. Azure Arc provides a uniform management experience for hybrid and multi-cloud environments, allowing you to deploy, administer, and govern servers at scale.

To further simplify configuration management, you can use Desired State Configuration (DSC) packages to define and enforce the desired state of your server infrastructure. Azure Automanage, one of Microsoft Azure's recent offerings, can help you do this efficiently.

What is Azure Automanage?

Azure Automanage is a Microsoft service that simplifies cloud server management. You can use Automanage to automate routine management tasks for your virtual machines across subscriptions and locations, such as security updates, backups, and monitoring. This keeps your servers up to date, secure, and optimized for performance without requiring you to spend a lot of time and effort on manual operations. You can read more about it here - https://learn.microsoft.com/en-us/azure/automanage/overview-about

Note that this post assumes basic knowledge of Azure and Desired State Configuration (DSC). If you are not familiar with these technologies, it is recommended that you brush up on them by going through the official Microsoft documentation at https://learn.microsoft.com/en-us/azure/virtual-machines/extensions/dsc-overview. This will help you better understand the concepts and features discussed in this post and make the most of the Azure and DSC capabilities.

This is the first post in a two-part series. It covers the following steps:

- Knowing your prerequisites

- Creating and compiling the DSC file

- Generating configuration package artifacts

- Validating the package locally on the authoring machine and checking its compliance status

- Deployment options for the package

Scenario: To keep things simple, this post does not build a complicated real-world scenario. Instead, it uses a simple DSC script that ensures the server always has a specific text file on its disk. This is the state to maintain throughout the post. Nothing prevents you from extending the idea with your own logic in your PowerShell scripts or with other DSC resources, such as those that manage registry keys.

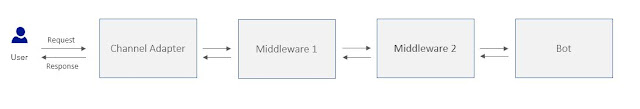

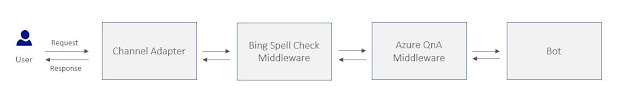

The illustration below can be used to visualize the entire implementation workflow, and the following steps will provide a detailed explanation of each.

The following example and steps were executed and tested on Windows. You can use Linux as well, but a few steps and commands might vary.

Pre-requisites

Before proceeding with the sample script and implementing the scenario, it is crucial to configure the machine and confirm that all necessary prerequisites are installed on it. This machine is typically known as an authoring environment.

Here are the key components you need in the authoring environment:

- Windows or Ubuntu OS

- PowerShell v7.1.3 or above

- GuestConfiguration PowerShell module

- PSDesiredStateConfiguration module
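
The module prerequisites can be installed from the PowerShell Gallery. The following is a minimal sketch, assuming the default PSGallery repository is registered and that a per-user install is acceptable. PSDscResources is included because the sample configuration later in this post imports it.

```powershell
# Install the authoring modules for the current user.
# Assumption: the PSGallery repository is registered and reachable from this machine.
Install-Module -Name GuestConfiguration -Scope CurrentUser -Repository PSGallery
Install-Module -Name PSDesiredStateConfiguration -Scope CurrentUser -Repository PSGallery

# PSDscResources provides the DSC resources that the sample configuration imports.
Install-Module -Name PSDscResources -Scope CurrentUser -Repository PSGallery

# Confirm the PowerShell version and that the modules can be discovered.
$PSVersionTable.PSVersion
Get-Module -ListAvailable -Name GuestConfiguration, PSDesiredStateConfiguration, PSDscResources |
    Select-Object -Property Name, Version
```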

Create and Compile DSC script:

Given this context and scenario, let's examine what the DSC script file might look like.
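
The following is a minimal sketch of such a script, not necessarily the exact one used for this post. It assumes the Script resource from PSDscResources, and the configuration name `EnsureTextFile`, the file path `C:\Temp\hello.txt`, and the file content are hypothetical values chosen for illustration.

```powershell
Configuration EnsureTextFile
{
    # Import the module that provides the Script resource.
    Import-DscResource -ModuleName 'PSDscResources'

    Script EnsureFile
    {
        # GetScript reports the current state; it must return a hashtable with a Result key.
        GetScript  = { @{ Result = [string](Test-Path -Path 'C:\Temp\hello.txt') } }

        # TestScript returns $true when the machine is already in the desired state.
        TestScript = { Test-Path -Path 'C:\Temp\hello.txt' }

        # SetScript runs only when TestScript returns $false and the package is allowed to remediate.
        SetScript  = {
            New-Item -Path 'C:\Temp' -ItemType Directory -Force | Out-Null
            Set-Content -Path 'C:\Temp\hello.txt' -Value 'Managed by DSC'
        }
    }
}

# Invoking the configuration compiles it and writes the .mof file.
EnsureTextFile
```

The path is repeated inside each script block on purpose, so that every block is self-contained when it is serialized into the .mof file.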

The script above imports the PSDscResources module, which is necessary for the proper compilation and generation of the .mof file as a result of running the DSC script.

I have observed that individuals with limited experience in DSC often become confused after preparing their DSC script and are uncertain about how to compile it to produce the output, which is the .MOF file.

To compile the DSC script and generate the .MOF file, you can follow these steps: Open the PowerShell console (preferably PS 7 or higher), navigate to the directory where the DSC file is saved on your local authoring environment, and then execute the .ps1 DSC file.
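
These steps can be sketched as follows. The folder `C:\dsc` and the file name `EnsureTextFile.ps1` are hypothetical; the sketch also assumes that the script ends by invoking a configuration named `EnsureTextFile`, so that running the file triggers the compilation.

```powershell
# Assumption: the DSC script is saved as C:\dsc\EnsureTextFile.ps1 (hypothetical path).
Set-Location -Path 'C:\dsc'

# Running the script compiles the configuration.
.\EnsureTextFile.ps1

# DSC writes the output into a folder named after the configuration;
# without an explicit node name the file is called localhost.mof.
Get-ChildItem -Path .\EnsureTextFile

# Optional: give the MOF a descriptive name before packaging it.
Rename-Item -Path .\EnsureTextFile\localhost.mof -NewName 'EnsureTextFile.mof'
```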

What is a MOF file?

The .MOF file generated by compiling a DSC (Desired State Configuration) script is a plain-text file in Managed Object Format that contains the configuration data and metadata necessary to apply the desired configuration to a target node. The MOF file is consumed by the Local Configuration Manager (LCM) on the target node to ensure that the system is configured to the desired state.

Generate configuration package artifacts:

After generating the .MOF file, the next step is to create the configuration package artifacts from it. You do this by running a command from the GuestConfiguration module, which bundles the artifacts into a .zip file.

You can run the command below in your authoring environment
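
A minimal sketch of the packaging command, using `New-GuestConfigurationPackage` from the GuestConfiguration module. The package name and the MOF path are hypothetical and assume the compiled file was renamed to `EnsureTextFile.mof`; point `Configuration` at `localhost.mof` if you kept the default name.

```powershell
# Hypothetical names and paths; adjust them to match your compiled MOF.
$packageParams = @{
    Name          = 'EnsureTextFile'
    Configuration = '.\EnsureTextFile\EnsureTextFile.mof'
    Type          = 'AuditAndSet'   # use 'Audit' for a report-only package
    Force         = $true           # overwrite a package left over from an earlier run
}

# Creates EnsureTextFile.zip in the current directory.
New-GuestConfigurationPackage @packageParams
```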

Please note that the command has several parameters that you should be familiar with. You can refer to the official documentation for a more detailed understanding of them. The most critical one is the "Type" parameter, which accepts two values: "Audit" and "AuditAndSet".

The value names suggest the action that the LCM (Local Configuration Manager) takes once the package is deployed. If you create the package in "Audit" mode, the LCM only reports whether the machine deviates from the desired state. Creating the package in "AuditAndSet" mode, on the other hand, ensures that the machine is brought back to the desired state according to the DSC configuration you have created.

The .zip file will be produced in the directory where your PowerShell console location is currently set. If you are interested in examining the contents of the zip file, you will find the package artifacts similar to the following.

The "modules" directory encompasses all the essential modules needed to execute the DSC configuration on the target machine once LCM is triggered. Additionally, the "metaconfig.json" file specifies the version of your package and the Type, as previously discussed in this post. The presence of the version attribute in this file indicates that you can maintain multiple versions of your packages, and these can be incremental as you continue making changes to your actual DSC configuration PowerShell files.

Validation and compliance check:

After generating the package, the subsequent step involves validating it by running it locally in the authoring environment to ensure that it can perform as expected when deployed to the target machines.

Typically, this is a two-step process where the first step involves checking the compliance of the machine, followed by running the actual remediation.
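
The two steps can be sketched with the corresponding cmdlets from the GuestConfiguration module. The package file name is hypothetical, and the commands will most likely need an elevated PowerShell session because they evaluate and change the local machine.

```powershell
# Hypothetical package name; run from the folder that contains the .zip file.
$package = '.\EnsureTextFile.zip'

# Step 1: evaluate the local machine against the package without changing anything.
Get-GuestConfigurationPackageComplianceStatus -Path $package

# Step 2: apply the configuration to the local machine.
Start-GuestConfigurationPackageRemediation -Path $package

# Re-run the check to see whether the machine is now reported as compliant.
Get-GuestConfigurationPackageComplianceStatus -Path $package
```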

As mentioned earlier, the second command takes into account the Type parameter value present in the metaconfig.json. This implies that if the package is designed solely for auditing the status, the remediation script will not attempt to bring the machine to the desired state. Instead, it will only report it as non-compliant.

Deployment options:

Before deploying the package to your target workloads, there are a few things you should keep in mind. Firstly, the package should be easily accessible during deployment so that the deploying entity can read and access it. Secondly, you should ensure the presence of the guest configuration extension to enable guest configurations on your target Windows VMs. Additionally, make sure that the target servers have managed identities created and associated.
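
For a single Azure Windows VM, the last two prerequisites can also be met by hand. The sketch below assumes the Az.Compute module, an authenticated session (`Connect-AzAccount`), and hypothetical resource group and VM names. It is meant for experimenting with one machine rather than as a replacement for a policy-driven approach.

```powershell
# Hypothetical names; replace them with your own resource group and VM.
$resourceGroup = 'rg-machine-config'
$vmName        = 'vm-demo-01'

# Enable a system-assigned managed identity on the VM.
$vm = Get-AzVM -ResourceGroupName $resourceGroup -Name $vmName
Update-AzVM -ResourceGroupName $resourceGroup -VM $vm -IdentityType SystemAssigned

# Install the guest configuration extension for Windows.
$extensionParams = @{
    ResourceGroupName      = $resourceGroup
    VMName                 = $vmName
    Location               = $vm.Location
    Publisher              = 'Microsoft.GuestConfiguration'
    ExtensionType          = 'ConfigurationforWindows'
    Name                   = 'AzurePolicyforWindows'
    TypeHandlerVersion     = '1.0'
    EnableAutomaticUpgrade = $true
}
Set-AzVMExtension @extensionParams
```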

To ensure that the package is accessible, one option is to upload it to a central location, such as Azure Storage. You can choose to store it securely and grant access to it using shared access signatures. In the next part, we will explore how to access it during the deployment steps. Optionally, you can also choose to sign the package with your own certificate so that the target workload can verify it. However, ensure that the certificate is installed on the target server before starting the deployment of the package to it.
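
Uploading the package and generating a read-only shared access signature could look like the sketch below. It assumes the Az.Storage module, an authenticated session, a storage account that allows shared key access, and an existing container; all resource names are hypothetical. The SHA256 hash is computed as well, because a deployment typically needs it alongside the package URI.

```powershell
# Hypothetical names; the storage account and container must already exist.
$resourceGroup  = 'rg-machine-config'
$storageAccount = 'stmachineconfigdemo'
$container      = 'packages'
$packagePath    = '.\EnsureTextFile.zip'
$blobName       = 'EnsureTextFile.zip'

# Key-based storage context taken from the storage account object.
$context = (Get-AzStorageAccount -ResourceGroupName $resourceGroup -Name $storageAccount).Context

# Upload the package, overwriting any earlier copy of the blob.
Set-AzStorageBlobContent -Container $container -File $packagePath -Blob $blobName -Context $context -Force |
    Out-Null

# Generate a read-only SAS URI; the three-month expiry is an arbitrary choice.
$sasParams = @{
    Container  = $container
    Blob       = $blobName
    Permission = 'r'
    ExpiryTime = (Get-Date).AddMonths(3)
    FullUri    = $true
    Context    = $context
}
$contentUri = New-AzStorageBlobSASToken @sasParams

# Hash of the package, used to verify the download on the target machine.
$contentHash = (Get-FileHash -Path $packagePath -Algorithm SHA256).Hash

$contentUri
$contentHash
```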

Regarding the managed identity requirement for the target workloads (Azure VMs or Arc-enabled servers), a recommended best practice is to assign an Azure Policy initiative to the scope where your workloads are hosted. The initiative ensures that all the prerequisites for deploying the package, such as those needed for guest assignments, are met.

Here is the initiative that I have used in my environment. As you can see, it contains four policies in total that ensure all the requirements are met before you deploy the package.

You can also view all the guest configuration assignments in the Azure portal through a dedicated blade, Guest Assignments.

As the Guest Configuration resource is an Azure resource, you can deploy it as an extension to new or existing virtual machines. You can integrate it into your existing Infrastructure as Code (IaC) repository for deployment via your DevOps process, or deploy it manually. It can be deployed with both ARM templates and Bicep.

As an example, here is what the Bicep template of this resource looks like:

While deploying the Guest Configuration resource manually or via DevOps can work, it's recommended to use Azure Policies to ensure proper governance in the environment. This ensures that both existing and new workloads are well-managed and monitored against the configurations defined in the DSC file. In the next post, we will discuss this in detail and leverage a custom Azure Policy definition to create the Guest Assignment resource. We will also explore the various configuration options available.

As we bid adieu to this blog post, let's remember to keep the coding flame burning and the learning spirit alive! Stay tuned for part 2, where we will delve deeper into the exciting world of Azure policies and custom policy definitions.